EN:Evaluation

No edit summary |

|||

| (3 intermediate revisions by 2 users not shown) | |||

| Line 3: | Line 3: | ||

For a [[EN:Network|network]] or [[EN:Monitoring Task|monitoring task]] individual evaluation criteria can be created and used.<br /> | For a [[EN:Network|network]] or [[EN:Monitoring Task|monitoring task]] individual evaluation criteria can be created and used.<br /> | ||

It is also possible to create multiple evaluation criteria which then form an average value.<br /> | It is also possible to create multiple evaluation criteria which then form an average value.<br /> | ||

Within the search you can [[EN:Search_for_evaluation|search]] for existing evaluations.<br /> | |||

== Assign evaluation == | == Assign evaluation == | ||

| Line 40: | Line 41: | ||

The US evaluation is higher than the latest EP evaluation. <br/> | The US evaluation is higher than the latest EP evaluation. <br/> | ||

Therefore, the evaluation of the US document is displayed as the "most relevant" evaluation of the family. <br/> | Therefore, the evaluation of the US document is displayed as the "most relevant" evaluation of the family. <br/> | ||

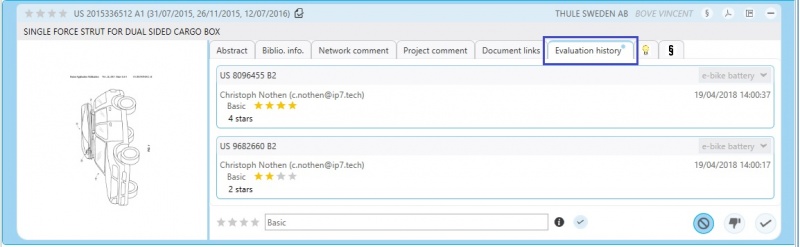

== Evaluation history == | |||

[[File:evalHistory.jpg|800px|right]] | [[File:evalHistory.jpg|800px|right]] | ||

All evaluations assigned the past are saved in the evaluation history. <br />Regardless of which project the user has opened, all evaluations from all projects / monitoring tasks are always displayed. <br/>The respective history can be opened in the result list or detail view. <br /> | All evaluations assigned in the past are saved in the evaluation history. <br /> | ||

Regardless of which project the user has opened, all evaluations from all projects / monitoring tasks are always displayed. <br/>The respective history can be opened in the result list or detail view. <br /> | |||

A blue dot in the result list indicates that there are entries in the history. <br /> | A blue dot in the result list indicates that there are entries in the history. <br /> | ||

It can therefore be seen at a glance whether a patent has already been evaluated in another project. <br /> [[File:EvalHisRes.jpg|800px]] | It can therefore be seen at a glance whether a patent has already been evaluated in another project. <br /> [[File:EvalHisRes.jpg|800px]] | ||

| Line 71: | Line 75: | ||

[[File:evalAverage1.jpg|600px]] | [[File:evalAverage1.jpg|600px]] | ||

As soon as an | As soon as an evaluation was made using the 3 criteria the average value is calculated and displayed:<br /> | ||

[[File:evalAverage2.jpg|600px|]] | [[File:evalAverage2.jpg|600px|]] | ||

Latest revision as of 10:44, 6 July 2021

A star scale is available for evaluating patents.

Within a search project, the evaluation criterion "Basic" with up to 4 stars is available.

For a network or monitoring task, individual evaluation criteria can be created and used.

It is also possible to create multiple evaluation criteria, from which an average value is calculated.

The search can also be used to find existing evaluations.

Assign evaluation

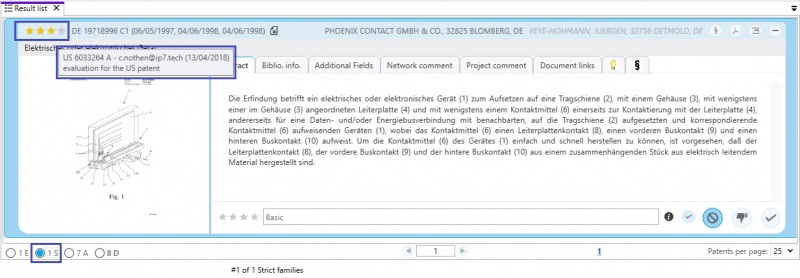

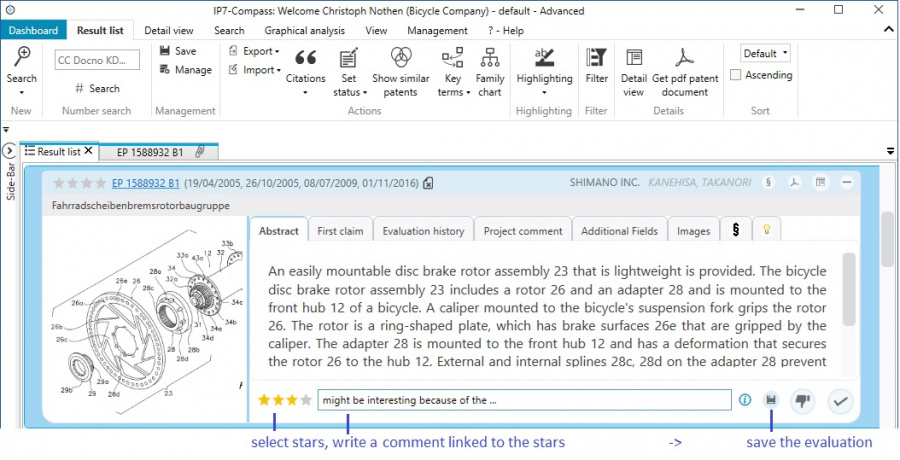

Patents can be evaluated in the result list as well as in the detail view.

Evaluation within a search project in the result list.

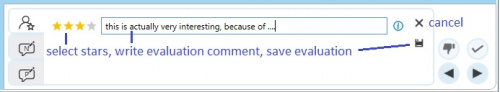

In the detail view, a new evaluation can be assigned using the plus icon.

The new evaluation is then created in the same way as in the result list.

Read evaluations

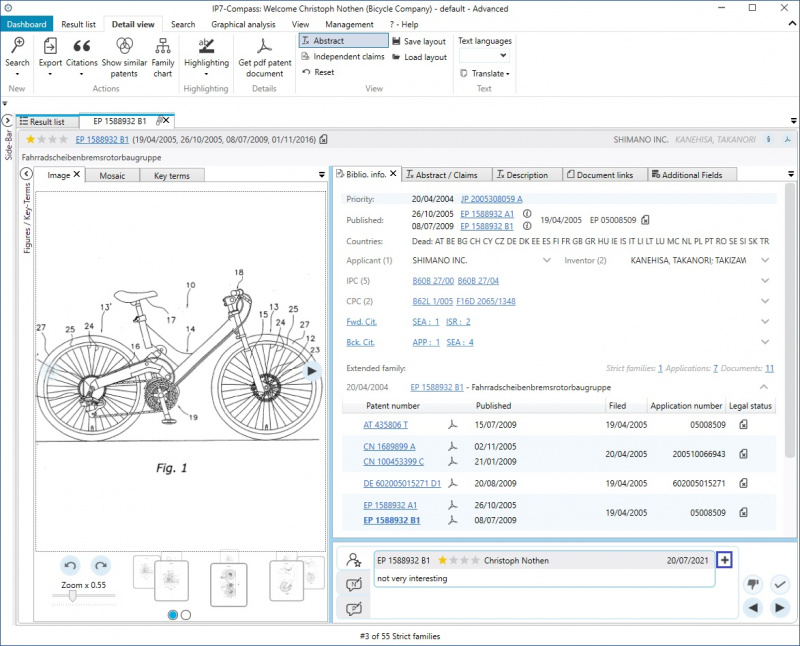

Evaluations that have already been assigned are displayed in the result list, the detail view and the evaluation history.

Which evaluation is displayed in the result list and detail view depends on the currently open project or task.

The base to which the result list is set also plays an important role.

If, for example, the result list is based on "strict family" (S), an evaluation assigned to another member of the family is also visible in the result list.

In this example, the displayed DE document has not been evaluated; however, the evaluation of the US-A document is displayed, since both documents belong to the same strict family.

If evaluations have been assigned to several documents of the same family, the "most relevant" evaluation is displayed.

The "most relevant" evaluation is determined as follows:

- For each application in the patent family, the latest evaluation is determined.

- The highest of these latest evaluations is then taken.

In the example, the latest evaluation for the EP application is therefore the evaluation of the B1 document.

The US evaluation is higher than the latest EP evaluation.

Therefore, the evaluation of the US document is displayed as the "most relevant" evaluation of the family.

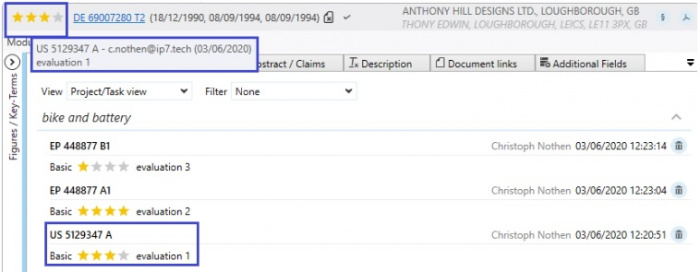

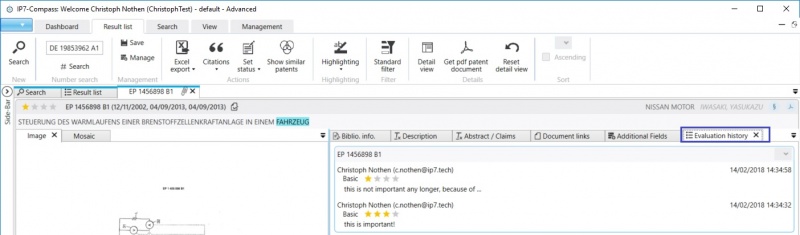
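The selection rule above can be sketched in Python as follows. This is a minimal illustration, not the application's actual implementation: the `Evaluation` class, its fields and the `most_relevant` function are hypothetical, all evaluations are assumed to use the same criterion (same star scale), and ties between equal star counts are resolved arbitrarily because the rule does not specify them.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class Evaluation:
    document: str      # hypothetical: publication the stars were assigned to
    application: str   # hypothetical: application the document belongs to
    stars: int
    assigned_on: date


def most_relevant(evaluations: list[Evaluation]) -> Evaluation | None:
    """Return the 'most relevant' evaluation of one patent family (sketch)."""
    # Step 1: keep only the latest evaluation per application.
    latest: dict[str, Evaluation] = {}
    for ev in evaluations:
        current = latest.get(ev.application)
        if current is None or ev.assigned_on > current.assigned_on:
            latest[ev.application] = ev
    if not latest:
        return None
    # Step 2: take the highest of these latest evaluations.
    return max(latest.values(), key=lambda ev: ev.stars)


# Hypothetical family: two EP documents from one application, one US document.
family = [
    Evaluation("EP A1", "EP application", 3, date(2020, 1, 10)),
    Evaluation("EP B1", "EP application", 1, date(2021, 3, 5)),
    Evaluation("US", "US application", 2, date(2020, 6, 1)),
]
print(most_relevant(family).document)  # US
```

With these made-up values, the superseded EP A1 evaluation has the most stars, but only the later EP B1 evaluation counts for the EP application, so the US evaluation is chosen.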

Evaluation history

All evaluations assigned in the past are saved in the evaluation history.

The history always shows all evaluations from all projects and monitoring tasks, regardless of which project the user has opened.

The history of a patent can be opened from the result list or the detail view.

A blue dot in the result list indicates that there are entries in the history.

It can therefore be seen at a glance whether a patent has already been evaluated in another project.

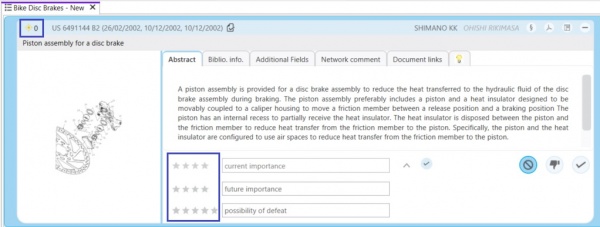
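As a rough illustration of how the history differs from the project-dependent display, the following sketch models both rules over one list of stored evaluations. The data model and all function names are hypothetical, the project dependency is simplified to a plain filter, and the family-based matching described under "Read evaluations" is left out.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class StoredEvaluation:
    patent: str   # hypothetical patent identifier
    project: str  # project or monitoring task in which it was assigned
    stars: int


def shown_in_view(store: list[StoredEvaluation], patent: str, open_project: str) -> list[StoredEvaluation]:
    """Result list / detail view: only evaluations of the currently open project."""
    return [e for e in store if e.patent == patent and e.project == open_project]


def history(store: list[StoredEvaluation], patent: str) -> list[StoredEvaluation]:
    """Evaluation history: evaluations from all projects and monitoring tasks."""
    return [e for e in store if e.patent == patent]


def has_blue_dot(store: list[StoredEvaluation], patent: str) -> bool:
    """The blue dot appears as soon as the history has at least one entry."""
    return len(history(store, patent)) > 0


store = [
    StoredEvaluation("patent-1", "Project A", 3),
    StoredEvaluation("patent-1", "Monitoring task B", 2),
]
print(len(shown_in_view(store, "patent-1", "Project C")))  # 0
print(len(history(store, "patent-1")))                     # 2
print(has_blue_dot(store, "patent-1"))                     # True
```

Here a user working in "Project C" sees no evaluation in the result list, but the blue dot reveals that the patent was already evaluated elsewhere.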

Average value when using multiple evaluations

Once multiple evaluation criteria are set up in a monitoring task, an average value of the assigned evaluations is calculated automatically.

Example

The following 3 evaluation criteria were created:

- current importance
  - important for current projects?
  - Weighting (value): 100

- future importance
  - important for future projects?
  - Weighting (value): 50

- possibility of defeat
  - how difficult will it be to create a workaround solution?
  - Weighting (value): 80

Within the monitoring task, these 3 criteria can then be used for evaluation.

The average value is displayed to the left of the patent number and is initially set to 0.

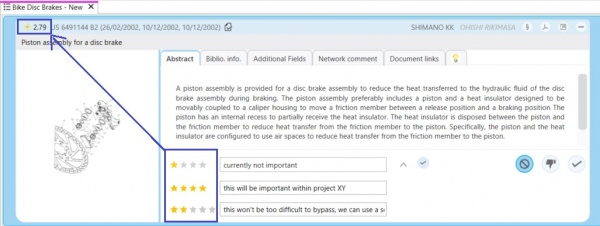

As soon as an evaluation has been made using the 3 criteria, the average value is calculated and displayed.

The average value is calculated as follows:

First, the weightings of all criteria are summed.

This results in a maximum weighting of 100 + 50 + 80 = 230.

The relevance of each criterion is then calculated using the following formula:

(selected stars / maximum stars) * (weighting / maximum weighting)
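Written with symbols introduced here only for illustration (s_i: selected stars, S_i: maximum stars, w_i: weighting of criterion i, W: maximum weighting), the relevance r_i of a criterion is:

```latex
r_i = \frac{s_i}{S_i} \cdot \frac{w_i}{W}, \qquad W = \sum_j w_j
```

In the example, W = 230, and S_i is 4 for the first two criteria and 5 for "possibility of defeat".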

- current importance: (1/4) * (100/230) = 0.1087

- future importance: (4/4) * (50/230) = 0.2174

- possibility of defeat: (2/5) * (80/230) = 0.1391

Finally, these values are summed and the sum is multiplied by 6:

0.1087 + 0.2174 + 0.1391 = 0.4652

0.4652 * 6 = 2.79
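The complete calculation can be sketched in Python as follows. The `Criterion` class, `average_value` and `SCALE` are hypothetical names; the sketch assumes that a criterion without an assigned evaluation counts as 0 stars, which is consistent with the average value initially being 0, and it rounds to two decimals only for display, as in the example.

```python
from __future__ import annotations

from dataclasses import dataclass

SCALE = 6  # final factor from the description above (hypothetical constant name)


@dataclass
class Criterion:
    name: str
    weighting: int   # "Weighting (value)" of the criterion
    max_stars: int   # number of stars available for this criterion


def average_value(criteria: list[Criterion], selected: dict[str, int]) -> float:
    """Weighted average value of a monitoring-task evaluation (sketch).

    Criteria without an assigned evaluation count as 0 stars (assumption).
    """
    max_weighting = sum(c.weighting for c in criteria)
    if max_weighting == 0:
        return 0.0
    relevance = sum(
        (selected.get(c.name, 0) / c.max_stars) * (c.weighting / max_weighting)
        for c in criteria
    )
    return relevance * SCALE


criteria = [
    Criterion("current importance", 100, 4),
    Criterion("future importance", 50, 4),
    Criterion("possibility of defeat", 80, 5),
]
example = {"current importance": 1, "future importance": 4, "possibility of defeat": 2}

print(round(average_value(criteria, example), 2))  # 2.79
print(average_value(criteria, {}))                 # 0.0
```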